Iran’s Attack on Israel

The latest neuro-nonsense is part of a larger drive towards reductionism in science.

Imagine that Tom is analysing a work of literature – The Grapes of Wrath, say. He looks at the plot, characterisation, and historical context, and uses various other tools of literary analysis to extract insights from, and perceive meaning in, the text. But Paula decides to take a different approach. She examines the type of paper that the book was printed on, and then looks at the ink used, employing gas chromatography to elucidate the chemical makeup of its ingredients. After also measuring the ink’s viscosity and the magnetic properties of semi-glossy paper, Paula tells Tom that the book can be fully understood only through this latter, scientific methodology, and that The Grapes is nothing more than the sum of its parts – the molecular interactions between ink droplets and the cellulose in the paper.

Reductionism can go haywire, reducing all phenomena to molecules in action.

Science has made great progress in the last three centuries by pressing the cause of reductionism. The idea is that underneath complex phenomena and entities are simpler, more fundamental layers that can be studied in order to fully elucidate the complex conglomerate. For example, biology has benefited by exploiting the reductionist tools of biochemistry, reducing complex biological phenomena to the level of chemistry.

But, as in our literary example above, the process can go haywire. Here is a typical expression of this meshugas, from no less a scientist than Francis Crick. In his book The Astonishing Hypothesis, Crick began with this conjecture:

“...that ‘You’, your joys and your sorrows, your memories and your ambitions, your sense of personal identity and free will, are in fact no more than the behaviour of a vast assembly of nerve cells and their associated molecules.”1

The key phrase here is “no more”. To Crick, as to the vast majority of evolutionary biologists, there is nothing more to any phenomenon than molecules in motion.

Informed consumers of science need to be aware of the limits of reductionism in science in general, and in biology especially. Brain scans provide a useful context in which to analyse this caveat.

In 1986 Patricia Churchland published Neurophilosophy, arguing that the questions that had been discussed by philosophers over many centuries would be solved once they were rephrased as questions of neuroscience. This was the first major outbreak of a new academic malaise: If philosophy could be replaced by neuroscience, why not the rest of the humanities? Disciplines that relied on critical judgment and cultural immersion could be given a scientific gloss when rebranded as neuroethics, neuromusicology, or neuroarthistory (the subject of a book, believe it or not, by John Onians).2

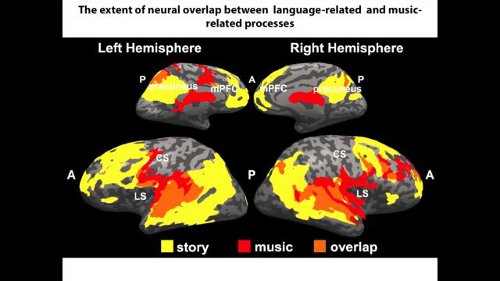

The idea that a neurological explanation could exhaust the meaning of experience was already being mocked as “medical materialism” by the psychologist William James a century ago.3 And in The Invisible Gorilla (2010), Christopher Chabris and Daniel Simons advise readers to be wary of such “brain porn”. But popular magazines, science websites and books are frenzied consumers of, and proselytisers for, these scans. “This is your brain on music,” announces a caption to a set of fMRI images, and we are invited to conclude that we now understand more about the experience of listening to music. The genre is inexhaustible: “This is your brain on poker,” “This is your brain on metaphor,” “This is your brain on diet soda,” “This is your brain on God” and so on. The attempt to explain, through snazzy brain-imaging studies, not only how thoughts and emotions function, but also how politics and religion work, and what the correct answers are to age-old philosophical controversies, is nothing less than an intellectual plague of neuroscientism. For years, the uninformed public has been deluged by references to innumerable studies that “explain” the most complex, subtle and ethereal phenomena on the basis of some colour-drenched picture of a sliced brain. The accompanying report, which purports to explain why human beings love, or envy, or believe in God, or prefer Coke to Pepsi, is heavy on neuro-babble. This is reductionist science run amok. The ubiquity of headlines containing phrases like “brain scans show” is matched only by the confusion they create in the minds of the public. So let’s revise some basics.

The human brain is, so far as we know, the most complex object in the universe. That a part of it “lights up” on a functional magnetic resonance imaging (fMRI) scan does not mean that the rest is inactive; it means that certain areas in the brain have an elevated oxygen consumption when a subject performs a task such as reading or reacting to stimuli such as pictures or sounds. The significance of this is not necessarily obvious. Technicolor brain scans are not anything remotely like photographs of the brain in action in real time.

Brain imaging is ubiquitous in pop science mostly because the images are media-friendly. The technology lulls the masses into thinking that the most complex entities and phenomena are reducible to simple images on a screen, a perfect fit for a generation hooked on iGadgets. Pretty pictures of the brain can seduce us into drawing simplistic conclusions, leading us to ask more of these images than they can possibly deliver. And even if brain scans were reliable indicators of brain activity, it is not straightforward to infer general lessons about life from experiments conducted under highly artificial conditions. (And of course, let’s remember that we do not have the faintest clue about the biggest mystery of all – how a lump of grey matter produces the conscious experience we take for granted.)

Paul Fletcher, Professor of health neuroscience at Cambridge University, says that he gets “exasperated” by much popular coverage of neuro-imaging research, which assumes that “activity in a brain region is the answer to some profound question about psychological processes. This is very hard to justify given how little we currently know about what different regions of the brain actually do.” Too often, he says, a popular writer will “opt for some sort of neuro-flapdoodle in which a highly simplistic and questionable point is accompanied by a suitably grand-sounding neural term and thus acquires a weightiness that it really doesn’t deserve. In my view, this is no different to some mountebank selling quacksalve by talking about the physics of water molecules’ memories, or a beautician talking about action liposomes.”

When the media conjure up stories with titles like “Brain Scans Show Vegetarians and Vegans More Empathic than Omnivores,” the content is almost entirely fictitious. It would be somewhat amusing if not for the fact that the masses out there take this as Science – magisterial, peremptory, authoritative.

In psychiatry, neurology and psychology, just about any conclusion is possible if you pick your evidence carefully.

Examples of this pop-science abound. Marketing consultant Martin Lindstrom tells us that people “love” their iPhones. This conclusion is based on the fact that brain scans of telephone users listening to their personal ring tones showed a “flurry of activation” in the insula, a prune-sized area of the brain. But researchers at UCLA claimed that photos of former presidential candidate John Edwards provoked feelings of “disgust” in subjects because they lit up the… insula. Is dopamine “the molecule of intuition”, as Jonah Lehrer suggested in The Decisive Moment (2009), or is it the basis of “the neural highway that’s responsible for generating the pleasurable emotions”, as he wrote in Imagine (2012)? Susan Cain’s Quiet: the Power of Introverts in a World That Can’t Stop Talking (2012), meanwhile, calls dopamine the “reward chemical” and postulates that extroverts are more responsive to it. Other stars of the pop literature are the hormone oxytocin (the “love chemical”) and mirror neurons, which allegedly explain empathy.

Just about any conclusion in science – but especially in psychiatry, neurology and psychology – is possible, if you pick your evidence carefully. “Having outlined your theory,” says Professor Fletcher, “you can then cite a finding from a neuro-imaging study identifying, for example, activity in a brain region such as the insula... You then select from among the many theories of insula function, choosing the one that best fits with your overall hypothesis, but neglecting to mention that nobody really knows what the insula does or that there are many ideas about its possible function.” The insula plays a role in a broad range of psychological experiences, including empathy and disgust, but also sudden insight, uncertainty, and the awareness of bodily sensations, such as pain, hunger, and thirst. With such a broad physiological portfolio, it is no surprise that the insula is activated in many fMRI studies.

If human beings are no more than a collection of biochemical responses, responsibility, beauty and altruism go out of the window.

Even more versatile than the insula is the infamous amygdala. Invariably described as “primitive” or even “reptilian”, the amygdala shows increased activation when one experiences fear, but it also springs to life when one encounters novel or unexpected stimuli. (In The Republican Brain, Chris Mooney suggests that “conservatives and authoritarians” might be the nasty way they are because they have a “more active amygdala”.) Because most brain areas serve many functions, reasoning backwards from the neural activation depicted by a scan to the subjective experience of the brain’s owner is a dubious strategy. This approach – formally referred to as “reverse inference” – is nothing but a high-tech and expensive Rorschach test, inviting interpreters to read whatever they wish into ambiguous findings. There is strong evidence for the amygdala’s role in fear, but then fear is one of the most heavily studied emotions; popularisers downplay or ignore the amygdala’s associations with the cuddlier emotions and memory.

One general lesson to take from all of this is that, notwithstanding the hype, results in science often mask an abyss of ignorance which, nonetheless, can be successfully marketed to an unsuspecting public. But, more importantly, a dangerous consequence of neuro-nonsense is its implicit tendency to abolish human responsibility. Take, for example, how addiction is often discussed nowadays in pop culture. We’ve known for ages that smoking, drinking, snorting cocaine and watching pornography can be habit-forming. When neuroscientists say that such activities are associated with increased dopamine levels, this is treated as some breakthrough in understanding addiction. One such article4 tells us that:

In the past, addiction was thought to be a weakness of character, but in recent decades research has increasingly found that addiction to drugs like cocaine, heroin and methamphetamine is a matter of brain chemistry.

Well, consider this:

In the past, the power of The Grapes of Wrath was thought to involve plot, characterisation, composition and so forth, but in recent decades research has found that it is a matter of chemical interactions between ink and paper.5

The philosopher Roger Scruton summarises the danger well:

Michael Gazzaniga’s influential study, The Ethical Brain, of 2005, has given rise to ‘Law and Neuroscience’ as an academic discipline, combining legal reasoning and brain imaging, largely to the detriment of our old ideas of responsibility... It seems to me that aesthetics, criticism, musicology and law are real disciplines, but not sciences. They are not concerned with explaining some aspect of the human condition but with understanding it, according to its own internal procedures... Brain imaging won’t help you to analyse Bach’s Art of Fugue or to interpret King Lear any more than it will unravel the concept of legal responsibility... The invention of ‘neurolaw’ is, it seems to me, profoundly dangerous, since it cannot fail to abolish freedom and accountability – not because those things don’t exist, but because they will never crop up in a brain scan.

The plague of neuro-nonsense is part of a much broader trend in Western science. It is the almost-inevitable culmination of the march to reductionism over the past 300 years. Reductionism almost invariably goes hand-in-hand with materialism, the nothing-but-molecules-in-motion philosophy which got such a boost from Darwin’s Origin of Species. If evolutionary biology is right, the ineluctable conclusion is that human beings are no more than a collection of biochemical responses to stimuli and neuronal interactions. Responsibility and justice, beauty and altruism, discipline and empathy then all go out of the window. When materialist reductionism runs riot, we all lose.

1 Francis Crick, The Astonishing Hypothesis: The Scientific Search for the Soul, 1994, A Touchstone Book published by Simon and Schuster, page 3.

2 http://www.spectator.co.uk/features/7714533/brain-drain/ Retrieved 18th May 2014.

3 http://www.newstatesman.com/culture/books/2012/09/your-brain-pseudoscience-rise-popular-neurobollocks Retrieved 18th May 2014.

4 http://bigthink.com/going-mental/your-brain-on-drugs-dopamine-and-addiction Retrieved 18th May 2014.

5 http://edwardfeser.blogspot.com/2012/03/scruton-on-neuroenvy.html#more Retrieved 18th May 2014.